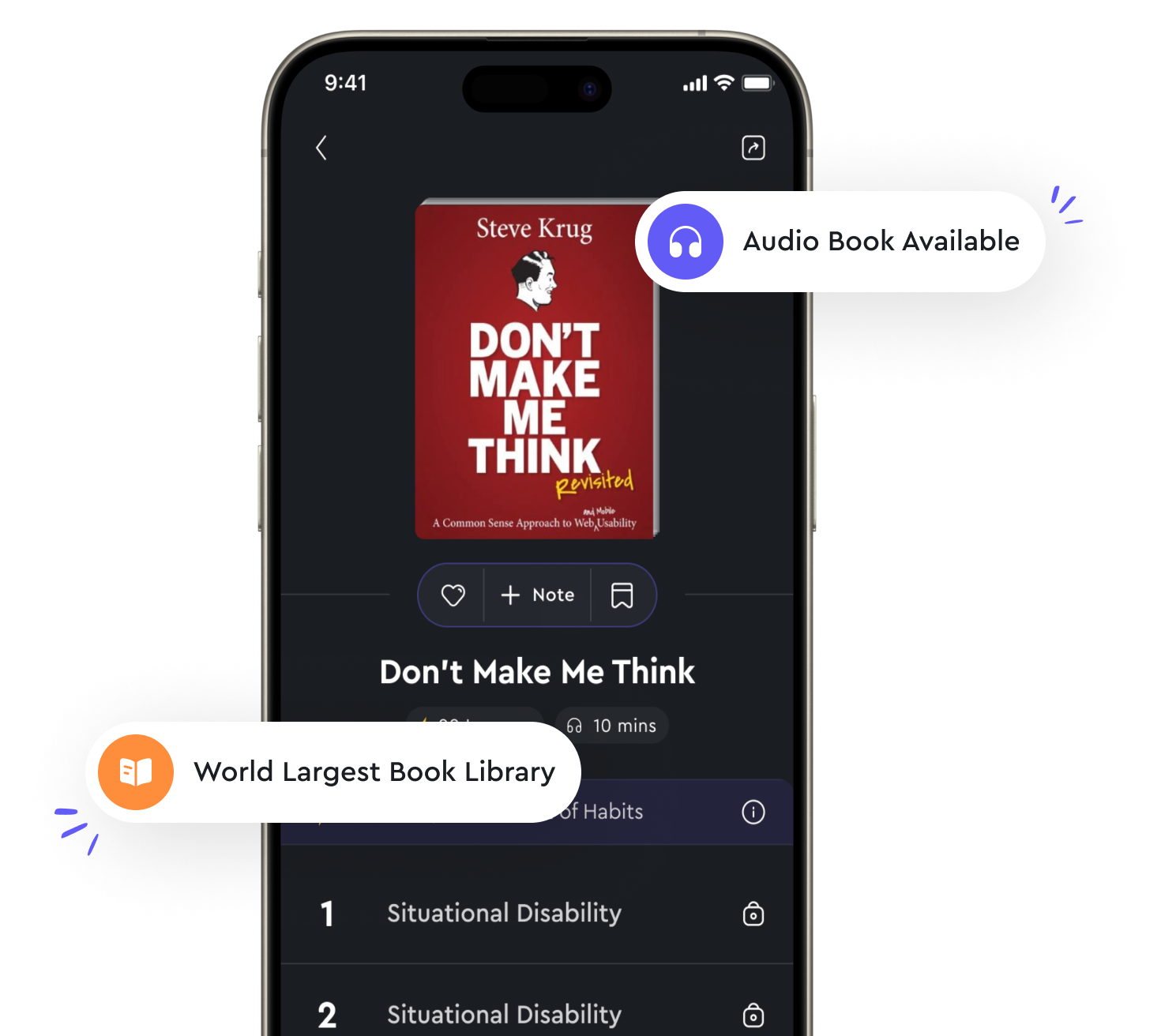

Dimensionality reduction techniques help in simplifying complex data from "summary" of Machine Learning by Stephen Marsland

Dimensionality reduction techniques are essential tools in machine learning because they simplify complex data. They work by reducing the number of variables (features) under consideration, which in turn lowers the computational cost of the problem. In many real-world applications the data is high-dimensional, which makes it hard to analyze and interpret. Reducing its dimensionality can strip out noise, redundant information, and irrelevant features, leaving a more concise and meaningful representation.

One common approach is principal component analysis (PCA), which finds the orthogonal directions along which the data varies the most. Projecting the data onto the first few of these principal components captures most of its variance in a lower-dimensional space. This not only simplifies the data but also helps in visualizing and understanding its underlying structure.

Another popular technique is t-distributed stochastic neighbor embedding (t-SNE), which is particularly useful for visualizing high-dimensional data in two or three dimensions. t-SNE focuses on preserving the local structure of the data, so points that are close together in the original space tend to stay close in the embedding. This makes it well suited to exploring cluster structure visually. Because it does not reliably preserve distances between far-apart groups, it is used mainly for visualization rather than as an input to clustering or classification algorithms. A t-SNE plot can reveal relationships between data points and patterns that are not apparent in the original high-dimensional space.

Dimensionality reduction techniques play a crucial role in simplifying complex data by transforming it into a more manageable form while retaining as much of the important information as possible. Reducing the dimensionality of the data can often improve the performance of machine learning algorithms, reduce overfitting, and speed up computation. These techniques are essential tools for data preprocessing and exploratory data analysis, enabling us to extract meaningful insights from high-dimensional data.
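
To make the PCA description above concrete, here is a minimal sketch in plain Python. It is an illustration under stated assumptions, not the book's implementation: the toy dataset and the helper names (`center`, `covariance_matrix`, `first_principal_component`, `project`) are invented for this example, and only the first principal component is found, using power iteration. In practice a numerical library would normally handle this step.

```python
import math

def center(data):
    """Subtract the per-feature mean from every row."""
    n, dims = len(data), len(data[0])
    means = [sum(row[j] for row in data) / n for j in range(dims)]
    return [[row[j] - means[j] for j in range(dims)] for row in data]

def covariance_matrix(centered):
    """Sample covariance matrix (divides by n - 1) of centered data."""
    n, dims = len(centered), len(centered[0])
    return [[sum(row[i] * row[j] for row in centered) / (n - 1)
             for j in range(dims)] for i in range(dims)]

def first_principal_component(cov, iterations=200):
    """Power iteration: repeatedly multiply a vector by the covariance
    matrix and renormalize. This converges to the unit eigenvector with
    the largest eigenvalue, i.e. the direction of greatest variance.
    Assumes cov is non-zero and the start vector is not orthogonal
    to that eigenvector."""
    dims = len(cov)
    v = [1.0] * dims
    for _ in range(iterations):
        w = [sum(cov[i][j] * v[j] for j in range(dims)) for i in range(dims)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    return v

def project(centered, direction):
    """Coordinate of each centered point along the given unit direction."""
    return [sum(a * b for a, b in zip(row, direction)) for row in centered]

# Hypothetical toy data: 2-D points lying close to the line y = 2x.
data = [[1.0, 2.1], [2.0, 3.9], [3.0, 6.2], [4.0, 7.8], [5.0, 10.1]]

centered = center(data)
cov = covariance_matrix(centered)
pc1 = first_principal_component(cov)
scores = project(centered, pc1)  # the 1-D representation of the data

# Fraction of the total variance retained by the 1-D projection.
projected_variance = sum(s * s for s in scores) / (len(scores) - 1)
total_variance = sum(cov[i][i] for i in range(len(cov)))
explained_ratio = projected_variance / total_variance

print("first principal component:", pc1)
print("fraction of variance retained:", explained_ratio)
```

Because the toy points lie almost exactly on a line, a single component retains nearly all of the variance. With real data the ratio is usually lower, and it is one common guide to how many components to keep.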