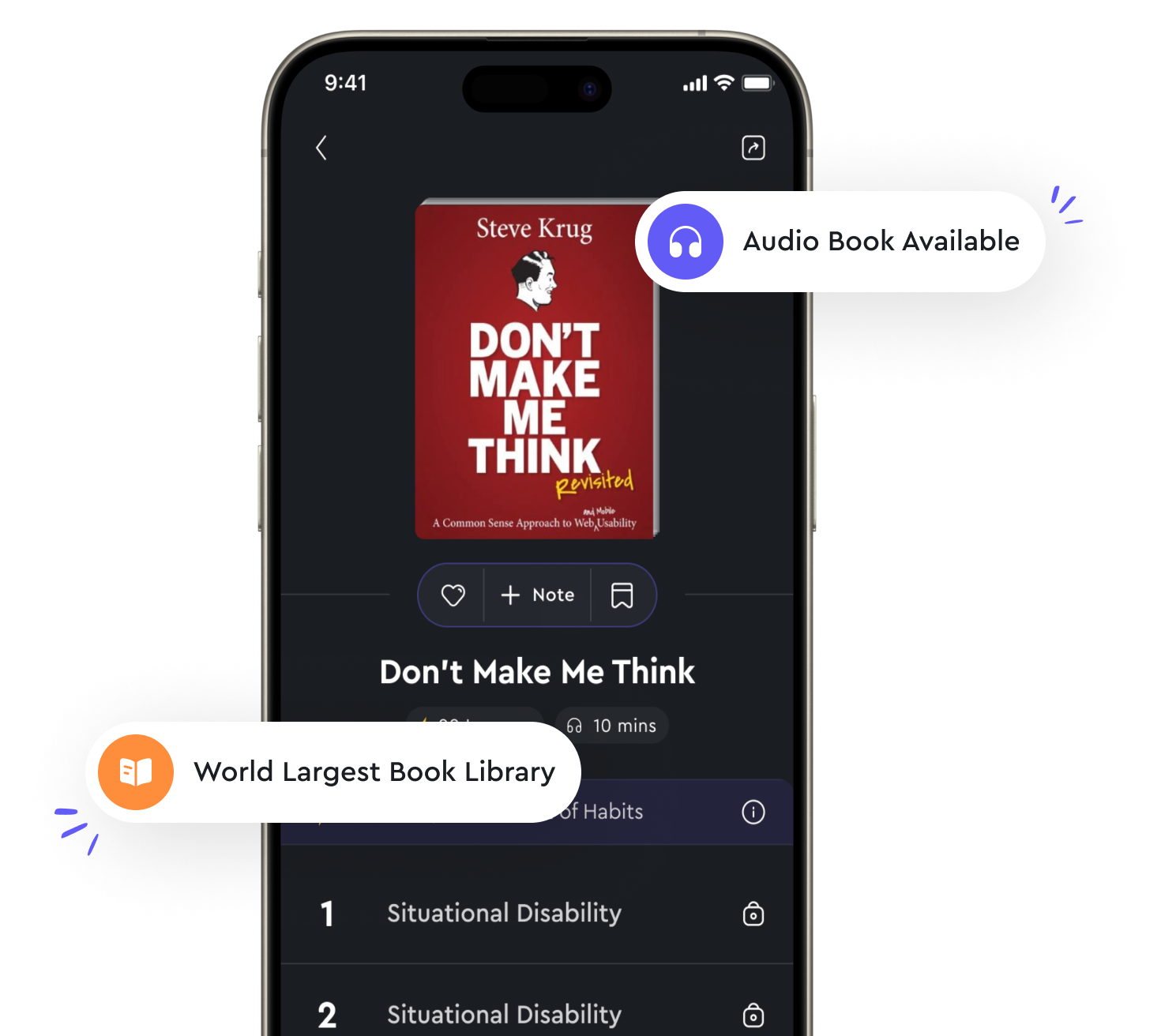


Decision trees are used for classification and regression tasks (from a summary of *Machine Learning* by Stephen Marsland)

Decision trees are versatile tools that can be used for both classification and regression tasks in machine learning. In classification, a decision tree divides the feature space into regions that correspond to different classes or categories. The algorithm recursively splits the data based on the values of the input features. This produces a tree-like structure in which each internal node represents a decision (a test on a feature) and each leaf node represents a class label. Splitting continues until a stopping criterion is met, such as reaching a maximum tree depth or having all instances in a node belong to the same class.

Decision trees can also be used for regression tasks, where the goal is to predict a continuous target variable. A regression tree recursively partitions the feature space into regions based on the values of the input features and fits a simple model (e.g., a constant value) to the target variable in each region. To predict the target for a new instance, the tree is traversed from the root node to a leaf node, and the model fitted to that leaf's region is used.

Decision trees have several advantages that make them popular in machine learning applications. One of the main advantages is their simplicity and interpretability: the resulting tree structure is easy to understand and visualize. Decision trees can also handle both numerical and categorical data, making them suitable for a wide range of datasets. Additionally, decision trees are non-parametric models. They make minimal assumptions about the underlying data distribution, which can be beneficial when dealing with complex or nonlinear relationships. However, decision trees are prone to overfitting, especially when the tree is allowed to grow very deep or the number of features is high.
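
The recursive splitting, stopping criteria, and leaf predictions described above can be sketched in plain Python. This is a minimal CART-style sketch, not the book's implementation. It assumes numeric features with binary threshold splits. It uses Gini impurity to choose classification splits and variance to choose regression splits; the text does not name a splitting criterion, and entropy or information gain is another common choice. Each leaf stores the majority class or the mean target. The names `Node`, `build`, and `predict` are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List, Optional, Union

Label = Union[str, float]


@dataclass
class Node:
    # Hypothetical structure: internal nodes have children, leaves have none.
    feature: Optional[int] = None      # feature index tested at an internal node
    threshold: float = 0.0             # go left when x[feature] <= threshold
    left: Optional["Node"] = None
    right: Optional["Node"] = None
    value: Optional[Label] = None      # prediction stored at a leaf


def gini(labels: List[Label]) -> float:
    """Gini impurity (assumed criterion): 0.0 when every label in the node is the same."""
    n = len(labels)
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())


def variance(targets: List[float]) -> float:
    """Mean squared deviation from the node mean (assumed regression impurity)."""
    mean = sum(targets) / len(targets)
    return sum((t - mean) ** 2 for t in targets) / len(targets)


def leaf_value(targets, task: str) -> Label:
    if task == "classification":
        return Counter(targets).most_common(1)[0][0]  # majority class
    return sum(targets) / len(targets)                # constant model: the mean


def build(X, y, task="classification", depth=0, max_depth=3, min_samples=2) -> Node:
    impurity = gini if task == "classification" else variance
    # Stopping criteria: depth limit, too few samples, or a pure node.
    if depth >= max_depth or len(y) < min_samples or impurity(y) == 0.0:
        return Node(value=leaf_value(y, task))

    best = None  # (weighted child impurity, feature, threshold, left idx, right idx)
    for f in range(len(X[0])):
        for thr in sorted({row[f] for row in X}):
            left = [i for i, row in enumerate(X) if row[f] <= thr]
            right = [i for i, row in enumerate(X) if row[f] > thr]
            if not left or not right:
                continue
            score = (len(left) * impurity([y[i] for i in left])
                     + len(right) * impurity([y[i] for i in right])) / len(y)
            if best is None or score < best[0]:
                best = (score, f, thr, left, right)

    if best is None:  # every candidate split leaves one side empty
        return Node(value=leaf_value(y, task))

    _, f, thr, left, right = best
    # Recurse on each side of the chosen split.
    return Node(
        feature=f,
        threshold=thr,
        left=build([X[i] for i in left], [y[i] for i in left], task, depth + 1, max_depth, min_samples),
        right=build([X[i] for i in right], [y[i] for i in right], task, depth + 1, max_depth, min_samples),
    )


def predict(node: Node, x) -> Label:
    # Traverse from the root until reaching a leaf (a node with no children).
    while node.left is not None:
        node = node.left if x[node.feature] <= node.threshold else node.right
    return node.value


X = [[1.0], [2.0], [3.0], [10.0], [11.0], [12.0]]
clf = build(X, ["a", "a", "a", "b", "b", "b"], task="classification")
reg = build(X, [1.0, 1.5, 0.5, 5.0, 5.5, 4.5], task="regression", max_depth=1)
print(predict(clf, [2.5]), predict(clf, [11.5]))  # a b
print(predict(reg, [2.5]), predict(reg, [11.5]))  # 1.0 5.0
```

On this toy data, both trees split at `x[0] <= 3.0`. The classification tree stops there because both children are pure. The regression tree is capped at `max_depth=1`, so each leaf predicts the mean target of its region. Categorical features would need a different split rule, such as equality tests, which this sketch omits.
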
Overfitting occurs when the model captures noise in the training data rather than the underlying pattern, which leads to poor generalization on unseen data. To mitigate overfitting, you can prune the tree, set a maximum tree depth, or use ensemble methods such as random forests.

Decision trees are powerful tools for both classification and regression tasks in machine learning. Their simplicity, interpretability, and ability to handle different types of data make them a popular choice for many applications. However, careful tuning and regularization are needed to prevent overfitting and improve generalization.
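
Of the mitigation techniques listed above, pruning operates on the tree itself. The sketch below shows one simple variant, reduced-error pruning. Working bottom-up, it tentatively replaces each internal node with a leaf that predicts that node's majority training label. The replacement is kept if accuracy on a held-out validation set does not drop. The text does not say which pruning method it has in mind, so this choice is an assumption. The tree and validation data are hand-made and hypothetical, as are the names `Node`, `predict`, `accuracy`, and `reduced_error_prune`.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Node:
    majority: str                     # majority training label here; used if the node becomes a leaf
    feature: Optional[int] = None     # None marks a leaf
    threshold: float = 0.0            # go left when x[feature] <= threshold
    left: Optional["Node"] = None
    right: Optional["Node"] = None


def predict(node: Node, x: List[float]) -> str:
    while node.feature is not None:
        node = node.left if x[node.feature] <= node.threshold else node.right
    return node.majority


def accuracy(root: Node, X, y) -> float:
    return sum(predict(root, x) == t for x, t in zip(X, y)) / len(y)


def reduced_error_prune(root: Node, node: Node, X_val, y_val) -> None:
    """Bottom-up: collapse a subtree into a leaf if validation accuracy does not drop."""
    if node.feature is None:
        return
    reduced_error_prune(root, node.left, X_val, y_val)
    reduced_error_prune(root, node.right, X_val, y_val)
    before = accuracy(root, X_val, y_val)
    saved = node.feature
    node.feature = None  # tentatively turn this node into a leaf
    if accuracy(root, X_val, y_val) < before:
        node.feature = saved  # pruning hurt validation accuracy: keep the split
    else:
        node.left = node.right = None  # prune for good


# Hypothetical overfit tree: the root split at x <= 5.0 reflects the real pattern,
# while the two splits below it chase a single noisy training point near x = 2.2.
noisy = Node("a", feature=0, threshold=2.0,
             left=Node("a"),
             right=Node("a", feature=0, threshold=2.5, left=Node("b"), right=Node("a")))
tree = Node("a", feature=0, threshold=5.0, left=noisy, right=Node("b"))

X_val = [[1.0], [2.3], [3.0], [4.0], [6.0], [8.0]]
y_val = ["a", "a", "a", "a", "b", "b"]
print(accuracy(tree, X_val, y_val))  # 0.8333333333333334
reduced_error_prune(tree, tree, X_val, y_val)
print(accuracy(tree, X_val, y_val))  # 1.0
```

Pruning the two noise-chasing splits raises validation accuracy from 5/6 to 1.0. Pruning the root split would lower it, so that split is kept. Library implementations offer related controls. For example, scikit-learn's `DecisionTreeClassifier` accepts `max_depth` and `ccp_alpha`; the latter enables minimal cost-complexity pruning, which differs from the method sketched here.
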