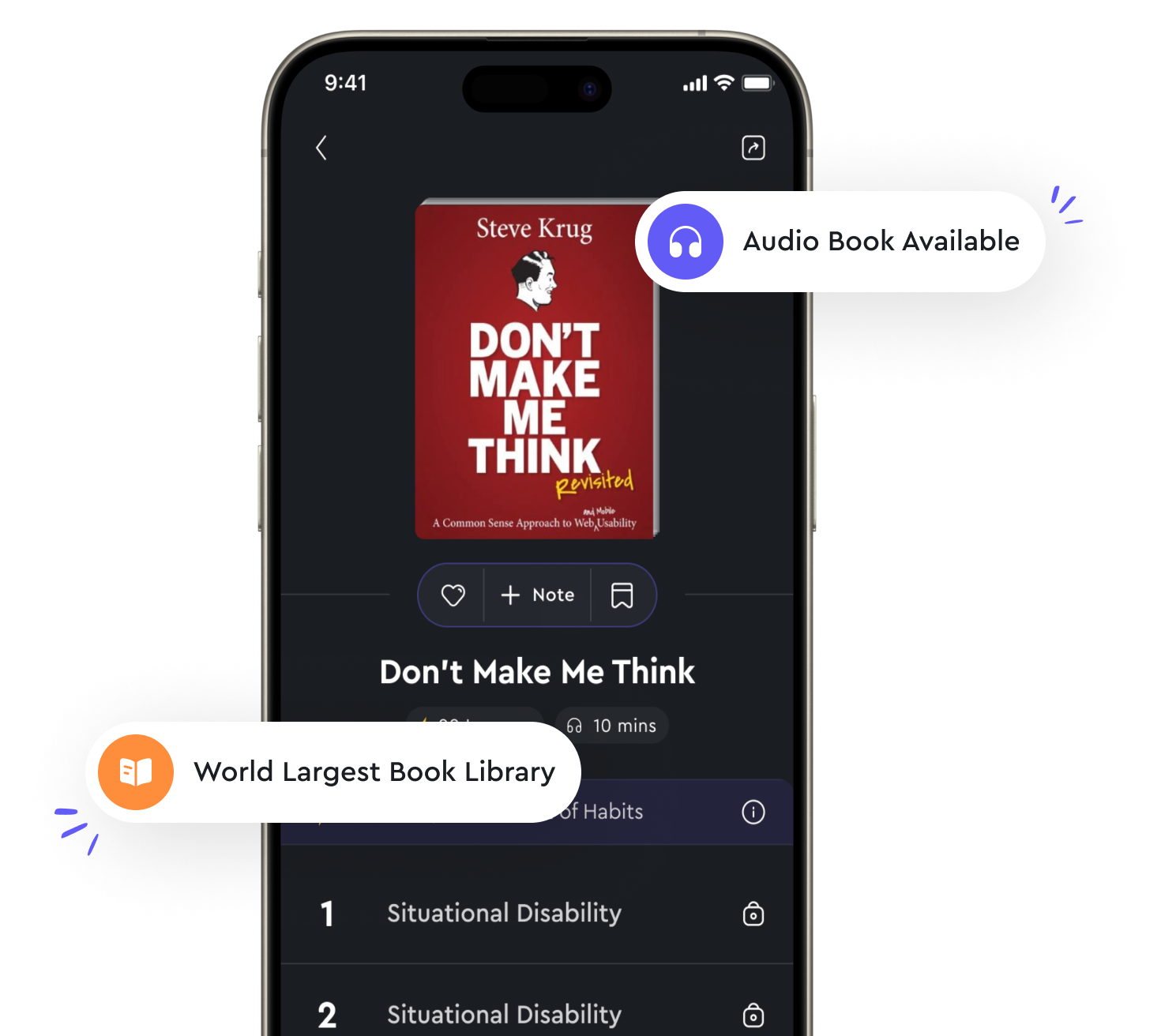

Dimensionality reduction techniques can simplify complex data sets from "summary" of Introduction to Machine Learning with Python by Andreas C. Müller, Sarah Guido

Dimensionality reduction techniques are a set of mostly unsupervised learning methods that reduce the number of features in a dataset. They are particularly useful for high-dimensional data, where they simplify complex data sets and make them more manageable for machine learning algorithms. Working with fewer features can improve model performance by lowering the risk of overfitting, and it shortens training times.

One common method is Principal Component Analysis (PCA), which identifies the directions in which the data varies the most and projects the data onto a lower-dimensional subspace defined by those directions. In doing so, PCA captures the most important patterns in the data while discarding less relevant information.

Another popular technique is t-distributed Stochastic Neighbor Embedding (t-SNE), which is used for visualizing high-dimensional data in a lower-dimensional space, typically two or three dimensions. t-SNE models the similarities between data points in the high-dimensional space and then finds a lower-dimensional representation that preserves these similarities as much as possible.

Beyond PCA and t-SNE, there are many other dimensionality reduction techniques, each with its own strengths and weaknesses. Some, such as Linear Discriminant Analysis (LDA), are designed for feature extraction in supervised learning tasks and use the class labels. Others, like Isomap and Locally Linear Embedding (LLE), are better suited to capturing non-linear relationships in the data. Choosing the right technique depends on the specific characteristics of the data and the goals of the analysis.

Dimensionality reduction techniques play a crucial role in simplifying complex data sets and making them more amenable to analysis. By reducing the number of features, they can improve the interpretability of the data, reduce computational costs, and enhance the performance of machine learning models. This makes dimensionality reduction an essential tool in the data scientist's toolkit, allowing them to extract valuable insights from high-dimensional data and build more accurate and efficient models.
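To make the description of PCA more concrete, here is a minimal sketch of the idea: find the directions of greatest variance and project the data onto them. It is written with plain NumPy and is not taken from the book. The helper name `pca_project` and the synthetic data set (200 samples, 5 features driven by 2 hidden factors) are assumptions chosen only for illustration.

```python
import numpy as np


def pca_project(X, n_components):
    """Project X (n_samples, n_features) onto its top principal components.

    Hypothetical helper for illustration, not a library function.
    """
    # Center each feature so the components describe variance around the mean.
    X_centered = X - X.mean(axis=0)
    # SVD of the centered data: the rows of Vt are the principal directions,
    # ordered by decreasing singular value, i.e. by decreasing variance.
    _, S, Vt = np.linalg.svd(X_centered, full_matrices=False)
    components = Vt[:n_components]
    # Share of the total variance captured by each kept component.
    explained_ratio = (S ** 2 / np.sum(S ** 2))[:n_components]
    # Coordinates of every sample in the lower-dimensional subspace.
    X_reduced = X_centered @ components.T
    return X_reduced, components, explained_ratio


# Synthetic data (an assumption): 5 observed features that are noisy
# linear mixtures of only 2 underlying factors.
rng = np.random.default_rng(0)
latent = rng.normal(size=(200, 2))
mixing = rng.normal(size=(2, 5))
X = latent @ mixing + 0.05 * rng.normal(size=(200, 5))

X_reduced, components, ratio = pca_project(X, n_components=2)
print("reduced shape:", X_reduced.shape)
print("variance kept by 2 components:", ratio.sum())
```

Because the five features here are generated from two factors, two components should retain almost all of the variance. Real data is rarely this clean, so the retained share is worth checking before discarding components. In practice one would more likely use a library implementation such as scikit-learn's `sklearn.decomposition.PCA`, or `sklearn.manifold.TSNE` for visualization, rather than hand-rolled code.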
