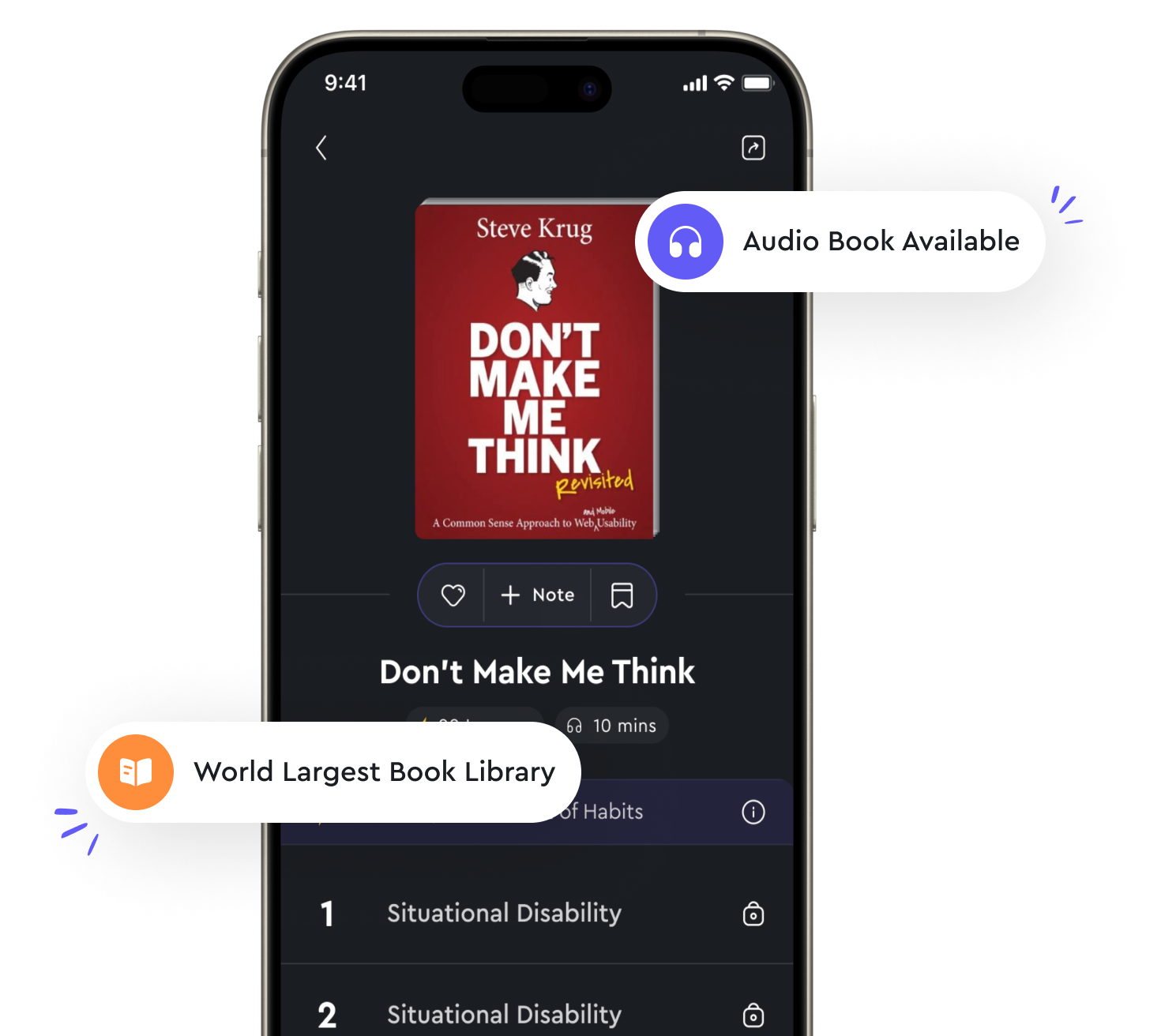

Decision trees are a popular algorithm for classification and regression tasks, from a summary of Data Science for Business by Foster Provost and Tom Fawcett

Decision trees are widely used in data science for both classification and regression tasks because they are simple and interpretable. They are essentially flowcharts that guide a decision based on input features. A decision tree starts at the root node and splits the data into subsets based on the values of a chosen feature. This process repeats recursively on each subset until a stopping criterion is met, such as reaching a maximum tree depth or arriving at a node in which all data points belong to the same class.

One of the main reasons decision trees are popular is their simplicity. They are easy to understand and interpret, even for people who are not data science experts. A decision tree can be drawn as a tree structure in which internal nodes test a feature, branches represent the outcomes of that test, and leaves represent the final prediction. The path from the root to a leaf therefore spells out the reasoning behind a particular prediction or classification.

Another advantage of decision trees is that they can, in principle, handle both numerical and categorical data with little preprocessing. Features do not need to be scaled or normalized, because a split depends only on how values are ordered relative to a threshold. This can save time and effort in data preparation, although some implementations still require categorical features to be encoded as numbers. For the same reason, decision trees are relatively insensitive to outliers in the input features, and some implementations can handle missing values during the splitting process rather than requiring them to be filled in beforehand.

In terms of performance, decision trees can be quite powerful when tuned properly. They are flexible enough to capture nonlinear relationships and interactions between features, which makes them suitable for a wide range of tasks. However, that same flexibility makes them prone to overfitting: a tree that is allowed to grow without limit can end up memorizing the training data. Techniques such as pruning, setting a minimum number of samples required to split a node, and limiting tree depth help prevent overfitting and improve generalization.

Decision trees are a versatile and effective algorithm for classification and regression tasks. They strike a good balance between simplicity and performance, making them a popular choice for many data scientists and analysts. By understanding the principles behind decision trees and how to tune them effectively, one can apply them to a variety of predictive modeling tasks.
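To make the recursive splitting and the stopping criteria described above concrete, here is a minimal sketch of a classification tree in plain Python. It is an illustration, not a reference implementation: it assumes numerical features only, picks each split by information gain (entropy reduction), and the names `best_split`, `build_tree`, `predict`, `max_depth`, and `min_samples_split`, as well as the toy age/income data, are made up for this example.

```python
from collections import Counter
from math import log2


def entropy(labels):
    """Shannon entropy (in bits) of a list of class labels."""
    total = len(labels)
    return -sum((n / total) * log2(n / total) for n in Counter(labels).values())


def best_split(rows, labels):
    """Return (gain, feature_index, threshold) for the best split, or None."""
    parent = entropy(labels)
    best = None
    for f in range(len(rows[0])):
        # Try every observed value of the feature as a "<= threshold" test.
        for threshold in sorted({row[f] for row in rows}):
            left = [y for row, y in zip(rows, labels) if row[f] <= threshold]
            right = [y for row, y in zip(rows, labels) if row[f] > threshold]
            if not left or not right:
                continue  # a split must put data on both sides
            weighted = (len(left) * entropy(left)
                        + len(right) * entropy(right)) / len(labels)
            gain = parent - weighted
            if best is None or gain > best[0]:
                best = (gain, f, threshold)
    return best


def build_tree(rows, labels, depth=0, max_depth=3, min_samples_split=2):
    """Recursively grow a tree; leaves are class labels, nodes are dicts."""
    majority = Counter(labels).most_common(1)[0][0]
    # Stopping criteria: pure node, depth limit, or too few samples to split.
    if len(set(labels)) == 1 or depth >= max_depth or len(rows) < min_samples_split:
        return majority
    split = best_split(rows, labels)
    if split is None or split[0] <= 0:
        return majority  # no split improves purity
    _, f, threshold = split
    left = [i for i, row in enumerate(rows) if row[f] <= threshold]
    right = [i for i, row in enumerate(rows) if row[f] > threshold]
    return {
        "feature": f,
        "threshold": threshold,
        "left": build_tree([rows[i] for i in left], [labels[i] for i in left],
                           depth + 1, max_depth, min_samples_split),
        "right": build_tree([rows[i] for i in right], [labels[i] for i in right],
                            depth + 1, max_depth, min_samples_split),
    }


def predict(tree, row):
    """Follow the tests from the root down to a leaf label."""
    while isinstance(tree, dict):
        branch = "left" if row[tree["feature"]] <= tree["threshold"] else "right"
        tree = tree[branch]
    return tree


# Hypothetical toy data: (age, income in thousands) -> bought the product?
rows = [(25, 30), (32, 45), (47, 52), (51, 110), (38, 95), (29, 120)]
labels = ["no", "no", "no", "yes", "yes", "yes"]

tree = build_tree(rows, labels)
print(tree)
print(predict(tree, (40, 100)))
```

On this toy data a single test on income separates the two classes, so the tree stops after one split. Lowering `max_depth` or raising `min_samples_split` forces earlier stops, which is the simplest form of the regularization discussed above. In practice one would normally reach for a library implementation rather than hand-rolled code like this.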
